COMMENTARY ON HOT TOPICS

Colonoscopy is widely considered to be the most effective tool for colorectal cancer (CRC) screening. However, recent studies suggest that variation in the quality of colonoscopy underlies uneven outcomes in CRC detection and prevention. As a result, clinical researchers, professional societies, and governmental policy-makers have sought to identify benchmarks for quality colonoscopy. The recent article by Filip et al[1] is an important contribution to this ongoing effort to delineate the key aspects of a high-quality colonoscopic examination. It also brings into focus the salient questions that define the current debate about quality improvement in screening colonoscopy.

What is quality colonoscopy?

To address this question, in 2006 the American College of Gastroenterology and the American Society for Gastrointestinal Endoscopy developed guidelines establishing quality indicators for colonoscopy. For intra-procedural performance, these guidelines set a withdrawal time (WT) of ≥ 6 min and a cecal intubation rate of ≥ 95% as quality indicators[2]. The most important benchmark, however, is an adenoma detection rate (ADR) of ≥ 25% in men and ≥ 15% in women undergoing average-risk screening colonoscopy[3]. European guidelines concur, setting a target ADR of 20% for average-risk colorectal screening in patients over the age of 50[4]. ADR was chosen as the primary quality indicator because the main benefit of colonoscopy, the detection and removal of neoplastic lesions, has been estimated to prevent 76%-90% of CRCs[5-7]. Although more recent studies suggest that the polyp detection rate (PDR), which also counts non-adenomatous (hyperplastic) polyps, can serve as a surrogate for ADR, ADR remains the principal quality indicator for colonoscopy[4,8,9].
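As a rough illustration of how these benchmarks are applied, the sketch below computes ADR as the share of screening colonoscopies in which at least one adenoma is detected, and compares the sex-specific rates with the ≥ 25% (men) and ≥ 15% (women) targets quoted above. The `ScreeningExam` record and its fields are hypothetical; a real audit would also need to restrict the denominator to average-risk screening examinations and rely on histology to confirm adenomas.

```python
from dataclasses import dataclass

# Sex-specific ADR targets for average-risk screening colonoscopy, as quoted in the text.
ADR_TARGETS = {"M": 0.25, "F": 0.15}


@dataclass
class ScreeningExam:
    """Hypothetical record for one average-risk screening colonoscopy."""
    sex: str             # "M" or "F"
    adenomas_found: int  # histologically confirmed adenomas in this exam


def adenoma_detection_rate(exams, sex=None):
    """Fraction of exams (optionally for one sex) with at least one adenoma.

    ADR counts patients, not adenomas: an exam with 3 adenomas counts once.
    """
    selected = [e for e in exams if sex is None or e.sex == sex]
    if not selected:
        raise ValueError("no exams to evaluate")
    positive = sum(1 for e in selected if e.adenomas_found >= 1)
    return positive / len(selected)


def meets_adr_targets(exams):
    """Return {sex: bool} indicating whether each sex-specific target is met."""
    return {
        sex: adenoma_detection_rate(exams, sex) >= target
        for sex, target in ADR_TARGETS.items()
    }


if __name__ == "__main__":
    # Hypothetical audit: 1 of 4 men and 1 of 6 women had an adenoma.
    exams = [
        ScreeningExam("M", 2), ScreeningExam("M", 0),
        ScreeningExam("M", 0), ScreeningExam("M", 0),
        ScreeningExam("F", 0), ScreeningExam("F", 1),
        ScreeningExam("F", 0), ScreeningExam("F", 0),
        ScreeningExam("F", 0), ScreeningExam("F", 0),
    ]
    print(adenoma_detection_rate(exams))  # overall ADR across both sexes
    print(meets_adr_targets(exams))
```

Because ADR is a per-patient proportion, an endoscopist who finds several adenomas in a few patients does not score higher than one who finds a single adenoma in each of the same number of patients.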

Do we perform quality colonoscopy?

Over the last decade, colonoscopy has been increasingly used as the primary modality for CRC screening in the United States, with a 14% increase in use among Medicare recipients from 2000 to 2003[10]. However, recent evidence suggests that this increase in utilization has not uniformly produced a concomitant reduction in CRC-related morbidity and mortality. In a case-control study, Baxter et al[11] showed that screening colonoscopy decreased overall CRC-related mortality [odds ratio (OR) 0.69, 95%CI: 0.63-0.74] and left-sided CRC-related mortality (OR 0.33, 95%CI: 0.28-0.39). Alarmingly, however, the study found that colonoscopy did not significantly decrease the risk of death from right-sided CRC (OR 0.99, 95%CI: 0.86-1.14)[11]. This finding was remarkable because it challenged the long-standing presumption that colonoscopy is superior to other CRC screening modalities primarily through its ability to detect right-sided neoplasms.

The variable impact of colonoscopy on CRC prevention is further illustrated by data on missed CRCs from the Manitoba cancer registry[12]. Defining a missed cancer as a CRC occurring within 6-36 mo of colonoscopy, the investigators found that nearly 1 in 13 CRCs had likely been missed on the initial colonoscopic examination[12]. Furthermore, risk factors for missed CRCs included colonoscopy with polypectomy and proximal tumor location, implicating failed cecal intubation and incomplete polyp resection as potential causes[12].

The importance of missed proximal colonic polyps is highlighted by the emerging recognition of sessile serrated adenomas (SSA) as a distinct class of colonic neoplasia with malignant potential. Histologically characterized by disorganized and distorted crypts, SSA tend to be proximal in location and to appear as flat or depressed lesions that are easily missed without careful examination[13]. The potential link between these lesions and missed CRCs is significant because SSA have been associated with an increased risk of proximal CRC (OR 4.79, 95%CI: 2.16-5.03)[14].

The most compelling evidence linking the quality of colonoscopy to CRC prevention comes from a study by Kaminski et al[15], which examined the relationship between endoscopists' ADR and the risk of interval CRC after colonoscopy. Comparing endoscopists with an ADR of < 11% with those with an ADR of > 20%, the investigators found a hazard ratio for the development of interval CRC of 10.94 (95%CI: 1.37-87.01)[15]. In a Cox proportional hazards regression model, endoscopist ADR and patient age were the only independent predictors of interval CRC[15]. This study, together with those discussed above, strongly suggests that quality colonoscopy is not uniformly performed, and it highlights the potential adverse impact of poor-quality colonoscopy on CRC prevention.

Are endoscopists to blame for poor quality colonoscopy?

While factors such as poor bowel preparation and patients' genetic predisposition to colorectal neoplasia have been implicated in missed neoplasia and in the development of CRC between colonoscopies, the preponderance of evidence points to the endoscopist as a key determinant of colonoscopy quality[16]. In a study of over 10 000 colonoscopies, Chen et al[17] found that mean ADR varied widely among 9 endoscopists, from 14% to 34.6%. In a multivariable analysis, the identity of the endoscopist had an impact on ADR similar to that of patient age and gender[17]. In a separate tandem colonoscopy study (back-to-back colonoscopies performed to identify missed lesions), Rex et al[18] found similar variability among participating endoscopists, with adenoma miss rates ranging from 17% to 48%. Other endoscopist-related factors, such as medical specialty (gastroenterologist vs non-gastroenterologist) and training level, have also been implicated in ADR variability[12,19,20]. Taken together, these findings indicate that factors related to the individual endoscopist have a large impact on the quality of colonoscopy.
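In tandem studies, the adenoma miss rate is conventionally the proportion of all adenomas found across both examinations that were detected only on the second pass. The sketch below assumes that definition (the text does not spell it out) and pools counts per endoscopist who performed the first examination; the input format and endoscopist identifiers are hypothetical.

```python
from collections import defaultdict


def adenoma_miss_rate(first_pass: int, second_pass: int) -> float:
    """Adenomas found only on the second (tandem) exam divided by all
    adenomas found across both exams."""
    total = first_pass + second_pass
    if total == 0:
        raise ValueError("no adenomas detected in either exam")
    return second_pass / total


def miss_rates_by_endoscopist(tandem_results):
    """Pool adenoma counts per endoscopist who performed the first exam.

    tandem_results: iterable of (endoscopist_id, first_pass, second_pass),
    one tuple per patient examined back to back.
    """
    first = defaultdict(int)
    second = defaultdict(int)
    for endoscopist, n_first, n_second in tandem_results:
        first[endoscopist] += n_first
        second[endoscopist] += n_second
    return {e: adenoma_miss_rate(first[e], second[e]) for e in first}


if __name__ == "__main__":
    # Hypothetical counts, chosen only to show the calculation.
    results = [("A", 5, 1), ("A", 0, 0), ("B", 2, 2), ("B", 1, 1)]
    print(miss_rates_by_endoscopist(results))
```

Pooling counts before dividing, rather than averaging per-patient rates, avoids dividing by zero for patients in whom neither examination found an adenoma.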

Is quality colonoscopy a matter of time?

The debate over quality colonoscopy has centered largely on colonoscopy WT, the amount of time spent inspecting the colonic mucosa for neoplastic lesions. This focus stems mainly from the landmark paper by Barclay et al[21], which compared ADR among endoscopists with varying WT. Defining WT as the time from cecal identification to withdrawal of the scope from the anus, the investigators found that endoscopists with a WT ≥ 6 min had a higher ADR than those with a WT < 6 min (28.3% vs 11.8%, P < 0.001)[21]. In a similar retrospective study of over 10 000 colonoscopies, Simmons et al[22] found that longer WT was associated with higher PDR (r = 0.76, P < 0.001) and that the overall median polyp detection rate corresponded to a WT of > 6.7 min.
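A minimal sketch of the WT definition used by Barclay et al, assuming each procedure record carries two timestamps (cecal identification and scope removal from the anus). It also assumes, for illustration, that the 6-min benchmark is applied to an endoscopist's mean WT across procedures. The timestamp fields and function names are hypothetical, and no time is subtracted for polypectomy because the definition above does not mention it.

```python
from datetime import datetime, timedelta
from statistics import mean

# Minimum withdrawal time used as a benchmark in the text.
MIN_WITHDRAWAL = timedelta(minutes=6)


def withdrawal_time(cecum_identified: datetime, scope_removed: datetime) -> timedelta:
    """WT: time from cecal identification to removal of the scope from the anus."""
    if scope_removed < cecum_identified:
        raise ValueError("scope removal recorded before cecal identification")
    return scope_removed - cecum_identified


def mean_withdrawal_minutes(procedures) -> float:
    """Mean WT in minutes over (cecum_identified, scope_removed) pairs."""
    return mean(withdrawal_time(start, end).total_seconds() / 60 for start, end in procedures)


def meets_wt_benchmark(procedures) -> bool:
    """True if the mean WT across the procedures is at least 6 minutes."""
    return mean_withdrawal_minutes(procedures) >= MIN_WITHDRAWAL.total_seconds() / 60


if __name__ == "__main__":
    day = datetime(2024, 1, 15)  # hypothetical procedure date
    cases = [
        (day.replace(hour=9, minute=12), day.replace(hour=9, minute=19, second=30)),  # 7.5 min
        (day.replace(hour=10, minute=5), day.replace(hour=10, minute=10)),            # 5.0 min
    ]
    print(mean_withdrawal_minutes(cases))  # 6.25
    print(meets_wt_benchmark(cases))       # True
```

The example also shows why a mean-based benchmark can be met even when individual withdrawals fall short of 6 min.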

However, since the publication of these initial studies, efforts to improve quality simply by mandating a minimum WT have largely failed to raise ADR significantly. In a study by Sawhney et al[23], a mandatory WT of ≥ 7 min significantly increased the WT compliance rate from 65% to 100%. Despite this, there was no concomitant increase in PDR (slope 0.0006, P = 0.45)[23]. Similar studies providing endoscopists with continuous feedback on their mean WT have also failed to produce significant increases in ADR[24].

One potential explanation for these findings is that there may be a ceiling to the improvement in ADR that can be achieved simply by prolonging WT. This was well illustrated by retrospective data from the VA cooperative study, in which mean WT was well above 12 min[25]. Although mean WT was associated with initial adenoma detection, it did not correlate with the probability of finding interval neoplasia on surveillance colonoscopy (P = 0.61)[25]. Similarly, a German study found that WT did not correlate with variability in ADR when mean WT ranged from 6 to 11 min[26]. These observations suggest that, while WT is an important performance parameter, it is not necessarily the deciding factor in the overall quality of colonoscopy.

Is quality colonoscopy a matter of technique?

In addition to the speed of withdrawal, recent attention has focused on the technique used to examine the colonic mucosa for neoplasia. The first study to examine this question, by Rex et al[27], compared two endoscopists who had shown markedly different adenoma miss rates in a separate tandem colonoscopy study. Using video recordings of colonoscopy withdrawals and a 5-point scale to grade withdrawal technique, the investigators found that the endoscopist with the lower adenoma miss rate (17%) scored higher on every aspect of withdrawal (distension, cleansing, time spent viewing, examination of the proximal aspects of folds) than the endoscopist with the higher miss rate (48%)[27].

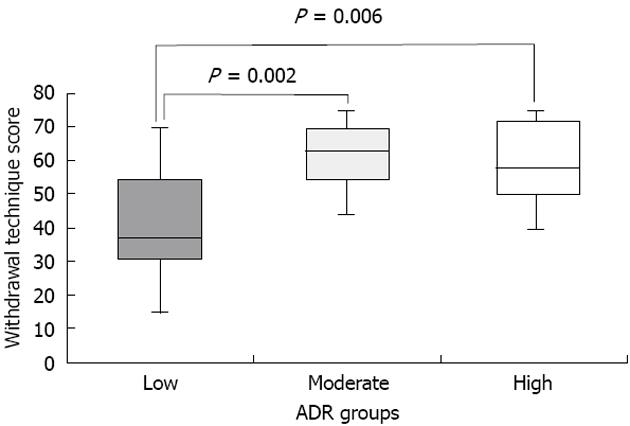

Our research team recently examined the relationship between WT and withdrawal technique in a broader set of endoscopists from several institutions (11 endoscopists from 2 Veterans Affairs hospitals and 3 university hospitals)[28]. A video-recording protocol and grading system were used to characterize the withdrawal technique and WT of endoscopists with low (11.8% ± 3.4%), moderate (34.1% ± 2.6%) and high ADR (49.0% ± 3.7%)[28]. Withdrawal technique was assessed using a scale adapted from Rex et al[27] that assigned 0-5 points for each of three dimensions: (1) fold examination (0 = not looking behind folds, 5 = looking behind all folds); (2) cleansing (0 = not cleaning pools, 5 = cleaning all pools); and (3) distension (0 = not distended or in spasm, 5 = good distension). Scores for each dimension were assigned for 5 areas of the colon (cecum, ascending, transverse, descending and sigmoid). Only colonoscopies performed for average-risk CRC screening in which cecal intubation was achieved were evaluated. Using this scoring system, we found that high and moderate ADR endoscopists had higher withdrawal technique scores than low ADR endoscopists (Figure 1)[28]. Furthermore, when the lowest and highest ADR endoscopists were compared, there was no significant difference in WT (6.6 ± 1.7 min vs 7.4 ± 1.7 min, P = 0.36), but technique scores differed roughly 1.7-fold (36.2 ± 9 vs 61 ± 9.9, P = 0.0001)[28]. One possible explanation is that low ADR endoscopists deliberately slowed their withdrawal to meet the 6-min goal but nonetheless did not perform a high-quality withdrawal technique.

Figure 1 Withdrawal technique scores among endoscopists with low, moderate and high adenoma detection rates.

ADR: Adenoma detection rate.
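To make the arithmetic of the withdrawal technique scale concrete, the sketch below assumes that the overall score is the simple sum of the 15 ratings (3 dimensions × 5 colonic segments × 0-5 points), giving a maximum of 75. The reported group means of 36.2 and 61 fall within that range, but the aggregation rule is an assumption, as are all identifiers in the code.

```python
# Hypothetical encoding of the scale: 3 dimensions rated 0-5 in each of 5 segments.
SEGMENTS = ("cecum", "ascending", "transverse", "descending", "sigmoid")
DIMENSIONS = ("fold_examination", "cleansing", "distension")
MAX_POINTS = 5
MAX_TOTAL = len(SEGMENTS) * len(DIMENSIONS) * MAX_POINTS  # 5 * 3 * 5 = 75


def technique_score(ratings):
    """Sum the 0-5 ratings for every (segment, dimension) pair (assumed rule).

    ratings: dict mapping (segment, dimension) -> int in 0..MAX_POINTS.
    Raises KeyError if any of the 15 cells is missing, ValueError if out of range.
    """
    total = 0
    for segment in SEGMENTS:
        for dimension in DIMENSIONS:
            points = ratings[(segment, dimension)]
            if not 0 <= points <= MAX_POINTS:
                raise ValueError(f"{segment}/{dimension}: {points} is outside 0-{MAX_POINTS}")
            total += points
    return total


def per_dimension_totals(ratings):
    """Total for each dimension across the 5 segments (each out of 25)."""
    return {d: sum(ratings[(s, d)] for s in SEGMENTS) for d in DIMENSIONS}


if __name__ == "__main__":
    # Hypothetical video review: 4/5 everywhere, but pools left uncleaned in the sigmoid.
    ratings = {(s, d): 4 for s in SEGMENTS for d in DIMENSIONS}
    ratings[("sigmoid", "cleansing")] = 1
    print(technique_score(ratings), "of", MAX_TOTAL)  # 57 of 75
    print(per_dimension_totals(ratings))
```

Keeping per-dimension totals alongside the overall score shows which aspect of technique (fold examination, cleansing or distension) drives a low result, which a single sum hides.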

The importance of withdrawal technique was also recently highlighted by a quality improvement study by Barclay et al[29]. Unlike the Sawhney study[23], which focused solely on a minimum WT, the protocol used by Barclay et al[29] combined a WT mandate with an institution-wide meeting of endoscopists that established guidelines on optimal withdrawal technique. With this two-pronged approach, the investigators demonstrated an improvement in ADR (37.8% post-intervention vs 23.5% pre-intervention, P < 0.0001) and more advanced neoplasms per patient screened[29].

The development of newer techniques for mucosal inspection also holds great promise for improving the quality of colonoscopy. East et al[30] recently showed that dynamic changes in patient position during withdrawal yielded a mean ADR of 52%, compared with 34% when withdrawal was performed only in the left lateral decubitus position (P < 0.001). Large-volume water immersion combined with water exchange to remove residual stool may also improve mucosal visualization; a recent meta-analysis showed increased detection of right-sided adenomas with this technique[31]. Finally, drawing on the Hawthorne effect, the tendency of individuals to perform better when they know they are being observed, Rex et al[32] demonstrated that simply video-recording the procedure improves WT and withdrawal technique.

Is quality colonoscopy a matter of technology?

Innovations in endoscope design and imaging have shifted the focus towards technological solutions for ensuring quality colonoscopy. One of the earliest methods applied to maximizing adenoma detection is chromoendoscopy. By using a spray catheter to coat the colonic mucosa with methylene blue or indigo carmine dye, this approach enhances colonic pit patterns and demarcates the border between normal and abnormal mucosa. Consistent with its ability to delineate flat adenomas, a recent meta-analysis found that chromoendoscopy was associated with a higher ADR (OR 1.67, 95%CI: 1.29-2.15) and a higher rate of detecting ≥ 3 neoplastic lesions (OR 2.55, 95%CI: 1.49-4.36) than white-light endoscopy (WLE)[33]. Furthermore, Stoffel et al[34] conducted a tandem colonoscopy study in which patients underwent either chromoendoscopy or WLE as the second examination. Chromoendoscopy detected a higher percentage of missed adenomas (44% vs 17%, P = 0.04), even when controlling for WT[34]. Given these results, the investigators concluded that the higher ADR seen with chromoendoscopy is due to the method itself rather than to the longer time the endoscopist spends inspecting the colon[34].

While current evidence suggests that chromoendoscopy does increase ADR, the method is time-consuming and requires additional equipment. Consequently, imaging modalities built into the colonoscope processor have been examined as a means of maximizing ADR. Narrow-band imaging (NBI), the most widely available of these technologies, uses short-wavelength light that is primarily absorbed by hemoglobin in the superficial mucosa[35,36]. By highlighting mucosal pit patterns and vascularity, NBI offers the potential to differentiate abnormal from normal mucosa at the press of a button on the colonoscope. However, a recent systematic review of observational studies and clinical trials found that NBI did not increase ADR compared with WLE (OR 1.19, 95%CI: 0.86-1.64), nor did it yield more adenomas per patient (relative ratio of means 1.23, 95%CI: 0.93-1.61)[37]. While other evidence suggests that NBI differentiates adenomatous from non-adenomatous tissue with sufficient sensitivity and specificity to potentially support a resect-and-discard strategy for colonic polyps, current data do not support its use as a means of enhancing ADR[37].

Another imaging modality proposed to increase ADR is autofluorescence imaging (AFI). AFI relies on the observation that the colonic mucosa emits autofluorescent light when illuminated by ultraviolet light[38]. The wavelength of this autofluorescent light depends on the architecture, light-absorptive properties and metabolic status of the illuminated tissue[38]. Exploiting this property, AFI has been characterized as a potential “red-flag” technology that would prompt the endoscopist to carefully inspect an area where a flat neoplastic lesion may be located.

Preliminary studies of AFI and adenoma detection have thus far been disappointing. In a head-to-head study of AFI vs high-resolution endoscopy (HRE), van den Broek et al[39] found no significant difference in adenoma miss rates (29% vs 20%, P = 0.35). In a study of trimodal imaging (AFI plus NBI plus HRE), Kuiper et al[40] found an ADR virtually the same as that with standard WLE (34% vs 37%, P = 0.61).

Other promising technologies on the horizon include the Third Eye Retroscope (Avantis Medical Systems, Sunnyvale, California), which allows retrograde visualization of neoplastic lesions behind mucosal folds. In a tandem colonoscopy study, Siersema et al[41] recently showed that the Third Eye system resulted in a lower adenoma miss rate than WLE. While these results are promising, the preponderance of evidence regarding new technologies suggests that technology alone cannot guarantee quality in colonoscopy.

The way forward for quality colonoscopy

Given the worldwide economic challenges surrounding health care delivery, governments and third-party payers are placing renewed focus on policies that provide the most cost-effective approach to disease prevention. As part of this trend, quality benchmarks for colonoscopy are obvious targets for pay-for-performance measures that seek to reward patient-oriented outcomes in CRC screening. Faced with this imperative, the endoscopic community will need to find innovative approaches to measuring and improving the quality of colonoscopy.

The evidence reviewed in this commentary illustrates that there is probably no single solution to the dilemma of ensuring quality in colonoscopy. While recent studies have clearly demonstrated that the time spent examining the mucosa is a vital component, evidence from quality improvement programs suggests that there is likely a ceiling effect to WT. Furthermore, while withdrawal technique has a definite impact on ADR, measuring the quality of technique is time-consuming, burdensome and not easily performed. Finally, given the mixed results of technological solutions for enhancing ADR, further work is clearly needed to integrate these advances into everyday practice.

The quest to preserve colonoscopy as the primary tool for quality CRC screening demands a multi-pronged approach, using research and innovation to enhance all three aspects of colonoscopy discussed here: time, technique and technology. By providing a potential means of easily quantifying the quality of withdrawal technique, the paper by Filip et al[1] is an important contribution to this process. Further research of this kind will be required to achieve the goal of ensuring quality in colonoscopy.